The future we build could depend on research we don’t read

Policy is made on evidence. Except it isn't — not really.

Somewhere in the process, an adviser is reading a shortlist of papers, knowing it will shape the decision, but not knowing what’s missing.

The research that could have changed the outcome might never make it into the room.

Academics call this the evidence-policy gap, documented across health, education, and public policy for decades.

That gap can be closed.

Most research never reaches policy

Basil Mahfouz has spent years mapping exactly this gap. As an early-career fellow at UCL, he built a model, supported by Elsevier datasets, that examines how likely any given paper is to be cited in policy. When he compared predicted relevance against what policymakers actually read, the pattern was stark.

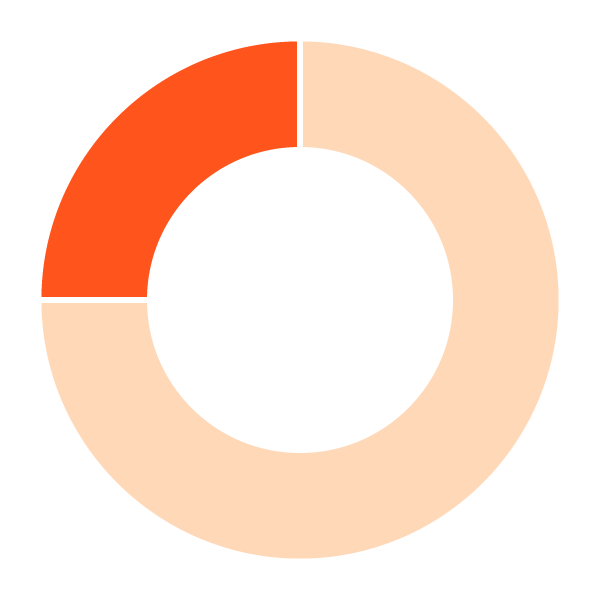

Roughly two-thirds of the science his model flags as highly relevant is never cited in policy. Not because it's weak. Because it's invisible.

"Policy makers are really drawing on a very narrow slice of the available evidence," he says.

What drives the narrow slice? Networks. Media visibility. Prestige markers. The signals academia uses to define excellence — citation counts, journal rankings — do not align cleanly with the research that drives policy. The system filters by familiarity rather than relevance.

That matters, because it determines which science shapes real-world decisions.

The demand for impact is rising

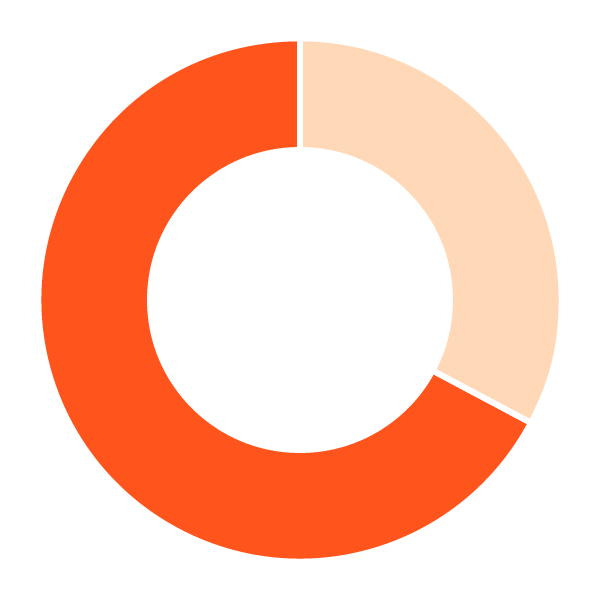

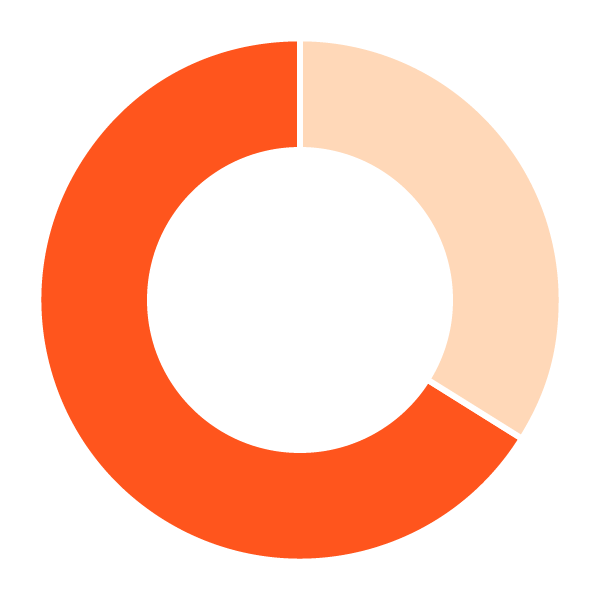

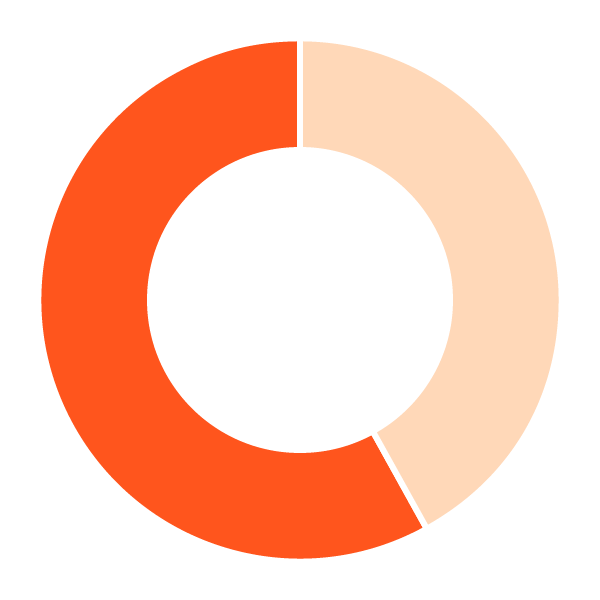

67% say research is becoming more mission-oriented

50% say it must deliver real-world benefit

66% are already engaging broader audiences

Source: Researcher of the Future

AI can widen the view – but not by default

AI could widen the field of view. Mahfouz believes that. But he's precise about the challenge.

When an AI selects 25 papers from hundreds of thousands of possible results, the critical question isn't whether it can summarize them accurately. It's whether those were the right 25 to begin with.

"You will have thousands, if not hundreds of thousands, of possible hits," he says. "If AI is choosing 25 on your behalf, it's picking a needle from a haystack and answering based on that."

This is where the quality of the underlying research base matters as much as the capability of the model. Fast answers built on a shallow corpus don’t solve the bias problem. They reproduce it, faster.

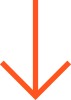

That's the gap LeapSpace is built to close. An AI-assisted workspace, it sits across a vast, curated body of peer-reviewed research, allowing AI to explore a broader field of evidence, not just what surfaces first.

Using natural language, it brings that material together and synthesises it into something usable, while linking every insight back to its source.

In a policy context, that accountability isn't optional.

Better decisions require broader evidence

Dr Thomas Nyman reaches the same conclusion from a different direction.

A lecturer in forensic psychology at the University of Reading, Nyman has been testing how AI handles legal evidence — comparing general-purpose models with more structured approaches using the same trial data.

The difference is consistent. "Specialized knowledge does enhance decision making."

But Nyman is careful about what that means in practice. A single study shouldn't change how decisions are made. "What would be needed for policy change is a lot more research from different avenues," he says — particularly in areas like AI, where it isn't always clear where bias or error might emerge.

That reinforces the same problem Mahfouz identifies. If decisions are built on a narrow slice of research, they carry the same blind spots.

The broader the evidence base, the easier decisions are to defend. And the easier they are to trust.

Trust determines what gets used

If widening the evidence base is the goal, trust becomes the constraint.

Robin Brooker, a research integrity specialist at the University of Reading, starts with something most researchers recognize.

"Most of us don't have the time to read every appendix, audit every data set, rerun analyses," he says. "We have to base our assessments of the trustworthiness of a piece of work on those trust markers."

Peer review. Named authors. Declared conflicts. These aren't bureaucratic conventions — they're what lets researchers, policymakers, and the public judge whether an answer deserves confidence.

AI doesn't change that. It raises the stakes.

"Just like statistical literacy, just like methodological literacy," Brooker says, "AI literacy is hugely important." If researchers can't explain how an answer was produced, that uncertainty doesn't stay contained. It carries through into every decision built on top of it.

At a recent roundtable hosted by the Foundation for Science and Technology and Elsevier in the UK, one participant put it directly:

“From the perspective of public confidence in research and development, trust is central… AI could create risks if it contributes to misinformation or weakens confidence in the quality of research.”

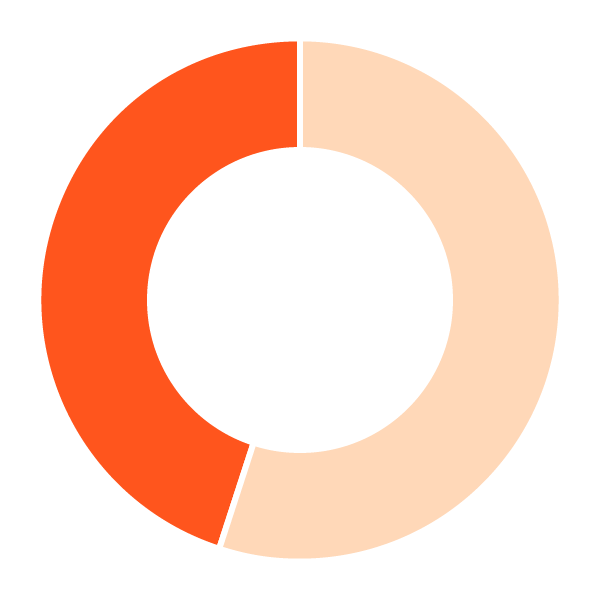

AI use vs confidence gap

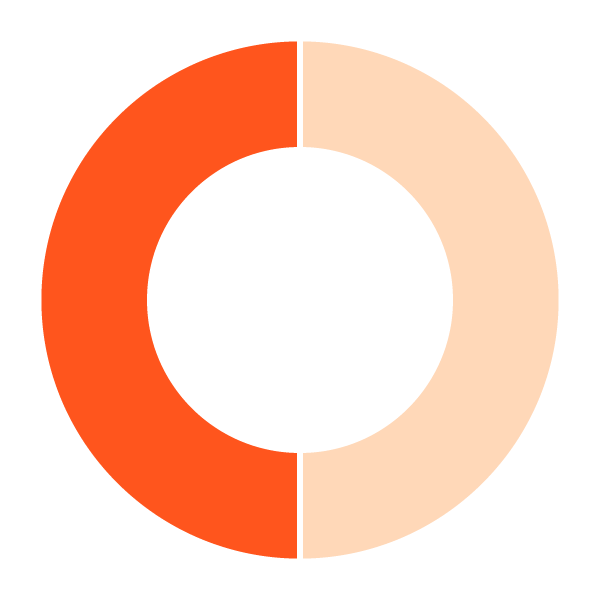

58% of researchers are already using AI tools

45% feel undertrained in how to use them

25% say their institution has strong governance

The future changes when more of the right research is seen

If AI is grounded in trusted, curated content — designed to surface what's genuinely relevant rather than merely familiar — the effect reaches far beyond any single workflow.

More researchers see their work shape decisions. More policymakers act from a fuller picture. And public choices get made on science that is genuinely the best available.

The answers are already out there.

They just need to be read.
